Agentic AI went mainstream fast. What was once a niche concept confined to research papers and developer experiments has, in the space of a few months, become something millions of people are actively using — scheduling meetings, processing emails, writing and executing code, coordinating workflows across platforms.

The catalyst was OpenClaw, the open-source personal agent built by Peter Steinberger. By combining persistent memory, sandboxed code execution, tool access, and messaging platform integration, it made autonomous agents feel practical for the first time. Its growth was explosive, its acquisition by OpenAI in February 2026 unsurprising.

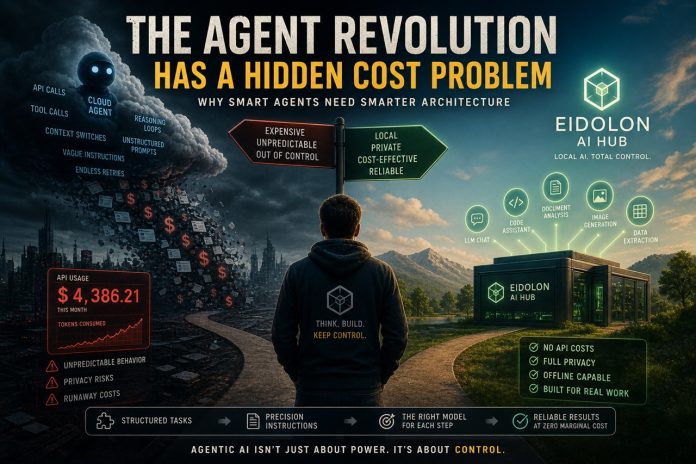

But beneath the excitement is a problem most users only discover the hard way: agentic AI on cloud infrastructure becomes expensive fast — and nearly everyone is using it wrong.

The API Cost Trap of Agentic AI

Cloud-based agents — whether built on OpenAI’s stack, Anthropic’s API, or Google’s Gemini — operate on a consumption model. Every prompt, every tool call, every reasoning step costs money. For a simple chatbot, this is manageable. For an agent running multi-step workflows, the math changes quickly.

Consider what happens when a user gives a generalist agent a broad instruction like “review my codebase and suggest improvements.” That single vague request can trigger dozens of sequential API calls: reading files, analyzing structure, cross-referencing patterns, generating suggestions, validating them, reformatting output. Each step consumes tokens. Each mistake consumes more tokens to fix, and vague instructions make mistakes far more likely.
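To make the arithmetic concrete, here is a rough sketch of how token usage can accumulate across a multi-step run. It assumes each call resends the context accumulated so far, which is a common pattern in agent loops but not a universal one. The function name, its parameters, and any prices you pass in are illustrative placeholders, not real provider rates.

```python
def estimate_run_cost(
    steps: int,
    base_context_tokens: int,
    tokens_added_per_step: int,
    output_tokens_per_step: int,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
) -> tuple[int, int, float]:
    """Return (input tokens, output tokens, cost) for one agent run.

    Assumption: every call resends the full context so far, so the
    billed input grows with each step. Prices are per million tokens
    and are hypothetical values supplied by the caller.
    """
    total_input = 0
    total_output = 0
    context = base_context_tokens
    for _ in range(steps):
        total_input += context            # the whole context is billed as input
        total_output += output_tokens_per_step
        # Tool results and the model's own output are appended to the context.
        context += tokens_added_per_step + output_tokens_per_step
    cost = (
        total_input * input_price_per_mtok
        + total_output * output_price_per_mtok
    ) / 1_000_000
    return total_input, total_output, cost
```

Under this assumption, billed input grows roughly with the square of the number of steps, which is why a run of thirty calls costs far more than thirty times a single call.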

The result is predictable: impressive demos, followed by bills that don’t match the value delivered.

This isn’t a flaw in agentic AI itself. It’s a consequence of how tasks are structured — or, more often, how they aren’t.

Generalist Prompts Are the Root Cause

The biggest mistake in agentic AI isn’t choosing the wrong model. It’s treating the agent like a magic wand and expecting vague, high-level instructions to produce precise, cost-effective results.

They don’t.

A generalist prompt doesn’t just reduce quality — it actively increases cost. It hands the agent a massive decision space with no constraints, no success criteria, and no defined output format. The model fills those gaps with assumptions. Sometimes those assumptions are reasonable. Often they’re not. Every wrong assumption triggers another loop through the API.

Developers who get consistent results — both in quality and cost efficiency — approach the problem differently. They decompose tasks aggressively and define behavior explicitly.

Instead of one agent doing everything, they build pipelines of narrow agents, each responsible for a single operation. Instead of conversational instructions, they use structured specification documents that define role, constraints, input format, expected output, and edge-case behavior.
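A minimal sketch of such a pipeline is shown below. The `Step` type, `run_pipeline`, and the two example steps are hypothetical names chosen for illustration, and plain functions stand in for the model calls so the example runs offline. The point is the shape: each step does one thing and carries its own success check.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Step:
    name: str
    run: Callable[[str], str]     # stand-in for one narrowly scoped model call
    check: Callable[[str], bool]  # success criterion for this step's output


def run_pipeline(steps: Iterable[Step], payload: str) -> str:
    """Pass the payload through each step, failing fast on invalid output."""
    for step in steps:
        payload = step.run(payload)
        if not step.check(payload):
            raise ValueError(f"step {step.name!r} produced invalid output")
    return payload


# Plain functions stand in for model calls (an assumption of this sketch).
def extract_todos(source: str) -> str:
    return "\n".join(
        line.strip() for line in source.splitlines() if "TODO" in line
    )


def format_as_bullets(text: str) -> str:
    return "\n".join(f"- {line}" for line in text.splitlines())


steps = [
    Step("extract", extract_todos, lambda out: out != ""),
    Step(
        "format",
        format_as_bullets,
        lambda out: all(line.startswith("- ") for line in out.splitlines()),
    ),
]
report = run_pipeline(steps, "x = 1  # TODO: rename\ny = 2\n# TODO: add tests")
```

Failing fast at the step that went wrong avoids spending further calls on output that was already unusable.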

In professional environments, these are often called Prompt Instruction Files (PIFs) — versioned, tested, and maintained like code. They turn an agent from an unpredictable system that “tries its best” into a controlled component with measurable behavior.
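One possible way to represent such a file in code is sketched below. The article does not prescribe a format, so the class name, field names, and the JSON-object output contract are all assumptions made for illustration.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptInstructionFile:
    """Hypothetical in-code form of a PIF; every field name is illustrative."""
    version: str
    role: str
    constraints: tuple[str, ...]
    input_format: str
    output_keys: tuple[str, ...]
    edge_cases: tuple[str, ...] = ()

    def render(self) -> str:
        """Build the instruction text sent to the model."""
        lines = [
            f"# PIF version {self.version}",
            f"Role: {self.role}",
            "Constraints:",
        ]
        lines += [f"- {c}" for c in self.constraints]
        lines.append(f"Input format: {self.input_format}")
        lines.append(
            "Respond with a JSON object containing exactly these keys: "
            + ", ".join(self.output_keys)
        )
        if self.edge_cases:
            lines.append("Edge cases:")
            lines += [f"- {e}" for e in self.edge_cases]
        return "\n".join(lines)

    def validate_output(self, raw: str) -> dict:
        """Parse a model reply and enforce the declared output contract."""
        data = json.loads(raw)  # raises ValueError on malformed JSON
        if not isinstance(data, dict):
            raise ValueError("expected a JSON object")
        if set(data) != set(self.output_keys):
            raise ValueError(f"unexpected keys: {sorted(data)}")
        return data


pif = PromptInstructionFile(
    version="1.0.0",
    role="Classify a support ticket by urgency.",
    constraints=("Use only the ticket text.", "Do not explain the answer."),
    input_format="Plain text, one ticket per request.",
    output_keys=("urgency",),
    edge_cases=("If the ticket is empty, set urgency to 'unknown'.",),
)
```

Because the specification is ordinary data, it can be stored in version control and covered by tests like any other module, and `validate_output` gives each step a measurable pass or fail.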

The difference in cost and reliability isn’t incremental. It’s often an order of magnitude.

Where Local Models Change the Equation

Once you accept that agentic AI works best with narrow tasks and precise instructions, a different question emerges: does every step in your pipeline really need a frontier cloud model?

In most cases, no.

Frontier models like GPT-4o or Claude are genuinely powerful for tasks that require broad reasoning, complex synthesis, or nuanced judgment. But most steps in a well-designed pipeline don’t require that level of capability. They require consistency, speed, and strict adherence to instructions.

This is where local models become relevant.

A well-configured 8B- or 12B-parameter model running locally, given precise PIF-style instructions for a narrow task, can match, and sometimes exceed, the reliability of a much larger cloud model on that task. Not because it’s more capable in general, but because the task has been designed to fit its strengths.

The tradeoff is real. Small local models carry less general knowledge than frontier models and are not suited for broad, open-ended reasoning. But for most operations in a decomposed pipeline (classification, extraction, formatting, domain-specific generation, structured analysis) they perform extremely well.
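The routing decision described above can be sketched as a simple lookup. The task labels mirror the operations listed in the text, while the backend names `"local"` and `"frontier"` and the function names are hypothetical. A real system might also route on input size or measured accuracy.

```python
# Task types that the text suggests a small local model handles well.
LOCAL_TASKS = frozenset({
    "classification",
    "extraction",
    "formatting",
    "domain_generation",
    "structured_analysis",
})


def choose_backend(task_type: str) -> str:
    """Route narrow, well-specified tasks locally; everything else to the cloud."""
    return "local" if task_type in LOCAL_TASKS else "frontier"


def plan_backends(task_types: list[str]) -> dict[str, int]:
    """Count how many pipeline steps would go to each backend."""
    counts = {"local": 0, "frontier": 0}
    for task_type in task_types:
        counts[choose_backend(task_type)] += 1
    return counts
```

Defaulting unknown task types to the frontier backend is a deliberate choice in this sketch: an unclassified step is more likely to need broad reasoning than a listed one.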

And they do it with no per-call fees, full data privacy, and no risk of runaway API bills caused by a poorly constrained agent loop.

Eidolon AI Hub: Local AI Built for This Approach

Eidolon AI Hub is a modular local AI platform built around this exact philosophy. It runs on consumer hardware — Windows, Linux, and macOS — with no cloud dependency, no subscription fees, and no data leaving your machine.

The platform is structured as a collection of specialized modules, each designed to do one thing well — and to be combined into controlled pipelines rather than forced into a single generalist system.

This matters. Because once you stop treating AI as a monolithic “assistant” and start treating it as a set of well-defined components, the entire cost and reliability equation changes.

For developers and professionals who want to integrate AI into real workflows — not just demos — Eidolon provides a practical foundation: it runs locally, it keeps data under control, and it aligns with the principle that well-specified, vertical tools outperform generalist systems in real-world use.

The platform is already in use by several hundred users through its Kickstarter release — people who wanted local AI that works, without having to build everything from scratch.

What’s Coming: A Vertical Coding Agent

The next major addition to the Eidolon ecosystem is a dedicated coding module — a vertical agentic system designed specifically for development workflows.

Instead of trying to replicate the “do everything” approach of generalist coding agents, it focuses on a narrow set of tasks: structured code analysis, targeted refactoring, documentation generation, and workflow automation.

All of it runs locally. All of it is driven by explicit instruction specifications rather than vague prompts.

The goal is not to compete with frontier cloud agents on breadth. It’s to provide something they often fail to deliver: reliability, predictability, and zero marginal cost for the tasks developers actually repeat every day.

More details soon.

The Practical Takeaway

Agentic AI is not hype. The ability to automate complex, multi-step workflows is genuinely transformative.

But it doesn’t work by default. It works when:

- Tasks are decomposed into narrow, well-defined steps

- Instructions are structured and precise, not improvisational

- The right model is matched to each task — including local models where appropriate

- Costs and behaviors are actively controlled
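As one illustration of the last point, a hard budget around the agent loop keeps a poorly constrained run from growing without limit. This is a minimal sketch: `RunBudget`, `BudgetExceeded`, and `run_with_budget` are hypothetical names, and `step` stands in for one model or tool call that reports whether it is done and how many tokens it used.

```python
from dataclasses import dataclass
from typing import Callable


class BudgetExceeded(RuntimeError):
    """Raised when a run hits its call or token ceiling."""


@dataclass
class RunBudget:
    max_calls: int
    max_tokens: int
    calls: int = 0
    tokens: int = 0

    def check(self) -> None:
        """Refuse to start another call once either limit is reached."""
        if self.calls >= self.max_calls:
            raise BudgetExceeded(f"call limit of {self.max_calls} reached")
        if self.tokens >= self.max_tokens:
            raise BudgetExceeded(f"token limit of {self.max_tokens} reached")

    def record(self, tokens_used: int) -> None:
        self.calls += 1
        self.tokens += tokens_used


def run_with_budget(step: Callable[[], tuple[bool, int]], budget: RunBudget) -> None:
    """Call step() until it reports done, or stop when the budget runs out.

    step() returns (done, tokens_used) for a single call.
    """
    while True:
        budget.check()            # checked before the call, so calls never exceed max_calls
        done, tokens_used = step()
        budget.record(tokens_used)
        if done:
            return
```

Because the check runs before each call, the call count never exceeds `max_calls`. The token total can overshoot `max_tokens` by at most one call, since usage is only known after the call returns.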

Developers who understand this early will build systems that actually work.

The rest will keep paying for systems that don’t.

Eidolon AI Hub is a local AI platform developed by Blacknode LTD (UK). Learn more at eidolonhub.com.